We used to have to teach computers the rules of language. Now they’re learning to understand language on their own.

To translate text, it’s not enough to swap phrases from A to B. It’s crucial to understand the grammatical rules of any given language and be aware of cultural norms of the area in which that language is spoken.

Translation must also be fast, error-free, and consistent. The challenge is balancing accuracy and speed, as well as automation and human intervention.

For most of its history, machine translation relied on rules and statistics, and the results were often clumsy and unnatural.

Modern translation systems are based on efficient machine learning and deep learning engines that have been developed, trained, and refined over time. Thanks to artificial intelligence algorithms and neural networks, machine translation has approached a near-human level of accuracy for many language pairs and content types.

There are three main approaches to machine translation:

- Rule-based machine translation (RBMT)

- Statistical machine translation (SMT)

- Neural machine translation (NMT)

In this article, we’ll take a closer look at all three and assess how you can implement machine translation to benefit your business.

Rule-Based Machine Translation

Rule-based machine translation (RBMT) is based on programmed information that dictates how a word or phrase in the source language should read in the target language.

For example, given an English word, the RBMT system outputs the best German word based on morphological, syntactic, and semantic analysis of both the source and target languages.

Here’s what we mean by those three deciding factors:

- Morphological: What form does each word take (tense, number, case, gender)?

- Syntactic: What rules govern how words combine into a sentence?

- Semantic: What’s the basic meaning of the sentence?

RBMT requires a full vocabulary and language rules to function properly. So, to produce a German translation of an English sentence, you need:

- a dictionary that will map each English word to an appropriate German word

- rules representing regular English sentence structure

- rules representing regular German sentence structure
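The three ingredients above can be sketched in a few lines of Python. This is a toy illustration rather than a real RBMT engine: the five-word `LEXICON`, the part-of-speech tags, and the function name `translate_rule_based` are all assumptions made up for this example. It applies a single German word-order rule (the past participle moves to the end of the clause).

```python
# Toy bilingual dictionary: English word -> (German word, part-of-speech tag).
# Hypothetical and tiny. "the" is hard-coded to the accusative masculine "den";
# a real system would choose the article through morphological analysis.
LEXICON = {
    "i": ("ich", "PRON"),
    "have": ("habe", "AUX"),
    "seen": ("gesehen", "PART"),
    "the": ("den", "DET"),
    "dog": ("Hund", "NOUN"),
}


def translate_rule_based(sentence: str) -> str:
    """Look up each word, then apply one German word-order rule."""
    words = sentence.lower().rstrip(".").split()

    # Step 1: dictionary lookup. Unknown words cannot be translated at all.
    tagged = []
    for word in words:
        if word not in LEXICON:
            raise KeyError(f"no dictionary entry for {word!r}")
        tagged.append(LEXICON[word])

    # Step 2: target-language structure rule. In a German main clause the
    # past participle goes to the end.
    participles = [entry for entry in tagged if entry[1] == "PART"]
    others = [entry for entry in tagged if entry[1] != "PART"]

    german = [word for word, _tag in others + participles]
    german[0] = german[0].capitalize()
    return " ".join(german) + "."


print(translate_rule_based("I have seen the dog."))
# prints: Ich habe den Hund gesehen.
```

Swap "dog" for "cat" and the function raises a `KeyError`, because the word is not in the dictionary. That is the adaptability problem in miniature: every new word and every new rule has to be added by hand.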

Since language is dynamic and evolves over time, the efficacy of RBMT is limited. Every new word or usage has to be added by hand, so it certainly lives up to its “machine” translation moniker with its lack of on-the-fly adaptability.

Statistical Machine Translation

Statistical machine translation (SMT) analyzes existing translations developed by humans (referred to as bilingual text corpora).

Whereas RBMT is a word-based approach, SMT systems are typically phrase-based. Instead of moving forward word by word, SMT can string words together into the likeliest phrase found in the bilingual text corpora.

Extensive analysis of bilingual text corpora (both the source and target languages) and monolingual corpora (the target language) generates statistical models that translate text from one language to another.
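To make the idea of "the likeliest phrase" concrete, here is a minimal sketch. The aligned phrase pairs in `ALIGNED_PHRASES` are invented for illustration, and the function names are assumptions. A real SMT system would also extract the phrase pairs automatically and combine the phrase table with a language model built from monolingual text; both steps are left out here.

```python
from collections import Counter, defaultdict

# Hypothetical aligned phrase pairs, as if extracted from human translations.
ALIGNED_PHRASES = [
    ("good morning", "guten Morgen"),
    ("good morning", "guten Morgen"),
    ("good morning", "guten Tag"),
    ("thank you", "danke"),
    ("thank you", "danke"),
    ("thank you", "vielen Dank"),
    ("good", "gut"),
]


def build_phrase_table(pairs):
    """Estimate P(target | source) by relative frequency in the corpus."""
    counts = defaultdict(Counter)
    for source, target in pairs:
        counts[source][target] += 1
    table = {}
    for source, targets in counts.items():
        total = sum(targets.values())
        table[source] = {t: c / total for t, c in targets.items()}
    return table


def translate_statistical(sentence, table, max_len=3):
    """Greedy longest-match segmentation, choosing the likeliest phrase."""
    words = sentence.lower().split()
    output, i = [], 0
    while i < len(words):
        # Try the longest phrase first, then shorter ones.
        for n in range(min(max_len, len(words) - i), 0, -1):
            phrase = " ".join(words[i:i + n])
            if phrase in table:
                options = table[phrase]
                output.append(max(options, key=options.get))
                i += n
                break
        else:
            # Phrase never seen in the corpus: pass the word through untranslated.
            output.append(words[i])
            i += 1
    return " ".join(output)


table = build_phrase_table(ALIGNED_PHRASES)
print(translate_statistical("good morning thank you", table))
# prints: guten Morgen danke
```

"good morning" becomes "guten Morgen" because that pairing appears in two of the three examples. A word the corpus has never seen, such as "evening", is passed through untranslated.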

It’s basically what fueled the growth of online translation tools like Google Translate, although Google has since pivoted to neural machine translation.

Free online translation itself goes back a little further. Introduced in 1997, AltaVista’s Babel Fish could automatically translate text between several languages. It was powered by SYSTRAN’s rule-based technology, was freely available online, and brought machine translation to the masses.

Statistical systems translate the source material based on the most common existing translations. They’re not without their flaws, though.

RBMT is limited by its dictionaries and rules, and SMT has a similar general weakness: it can only translate a phrase reliably if that phrase exists in the reference texts.

Neural Machine Translation

Neural machine translation (NMT) differs from its rule- and stat-based precursors in that it learns how to translate directly from examples and keeps improving as it is trained on more data.

NMT can recognize patterns in the source material to determine a context-based interpretation that can predict the likelihood of a sequence of words.
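The sketch below illustrates what "predicting the likelihood of a sequence of words" means in practice. It is not a neural network: `toy_decoder_scores` is a hypothetical stand-in with hand-written scores where a trained model would compute them. The surrounding mechanics are the standard ones, though. A softmax turns scores into probabilities, and the probability of a whole translation is the product of its word-by-word probabilities (summed here as logarithms).

```python
import math


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores.values())
    exps = {word: math.exp(s - peak) for word, s in scores.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}


def toy_decoder_scores(source_words, prefix):
    """Hypothetical stand-in for a trained network's output layer.

    Returns made-up scores for the next German word, given the whole English
    source sentence and the German words produced so far.
    """
    river_context = "river" in source_words
    if not prefix:
        if river_context:
            return {"das": 2.0, "die": 0.5}
        return {"das": 0.5, "die": 2.0}
    if prefix[-1] == "das":
        return {"Ufer": 2.5, "Bank": -1.0}
    return {"Ufer": -1.0, "Bank": 2.5}


def sequence_log_prob(source_words, target_words):
    """Chain rule: sum log P(word | source, earlier target words)."""
    total = 0.0
    for t, word in enumerate(target_words):
        probs = softmax(toy_decoder_scores(source_words, target_words[:t]))
        total += math.log(probs[word])
    return total


def best_candidate(source, candidates):
    """Pick the candidate translation the toy model finds most likely."""
    source_words = source.lower().split()
    return max(candidates, key=lambda c: sequence_log_prob(source_words, c.split()))


candidates = ["das Ufer", "die Bank"]
print(best_candidate("the river bank", candidates))  # prints: das Ufer
print(best_candidate("the bank", candidates))        # prints: die Bank
```

The same English word "bank" ends up as "Ufer" (riverbank) or "Bank" (financial institution) depending on the rest of the sentence. This is the kind of context-based interpretation the paragraph above describes.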

NMT is an application of deep learning. It’s a breakthrough because humans no longer have to write the translation rules or build the phrase tables by hand. Instead, large neural networks learn the mapping from source to target language directly from examples of human translation.

The explicit, step-by-step logic of traditional programs is replaced by a neural network loosely modelled on the human brain. The software improves as it is trained on more examples.

Machine Translation in Action

In 2006, Google launched its machine translation service. Rather than building on the rules-based approach that, as we discussed, requires a lot of work by linguists to define vocabularies and grammars, they took a different route.

Google’s approach was to feed the computer with billions of words of text, both monolingual text as well as examples of human translations between languages. They then applied statistical learning techniques to build the phrase-based translation model.

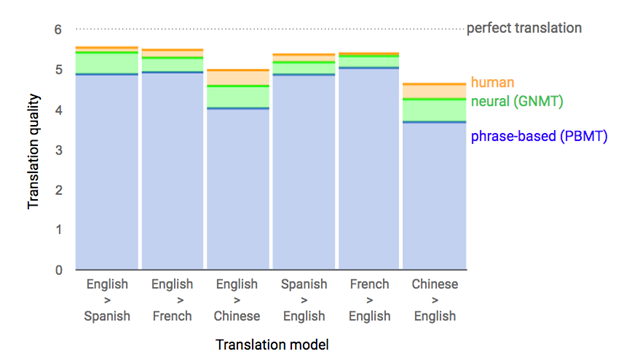

Ten years later, in 2016, they introduced the Google Neural Machine Translation system (GNMT), which demonstrated marked improvements in machine translation quality. Whereas phrase-based machine translation (PBMT) deconstructs a sentence into words and small phrases to be translated largely independently, NMT can consider the context of the entire input sentence as a unit for translation.

Google was able to make NMT work on very large data sets and build a system that is fast and accurate enough to provide better translations for Google’s users.

However, even GNMT lags behind human translation when it comes to achieving the best possible results.

Courtesy: Google AI Blog

We’re not yet at the point where translation can be automated with 100% accuracy, meaning a human touch is still quite necessary.

Let’s say, for example, you want to localize your e-commerce website with a specific target market in mind. You wouldn’t simply copy and paste a blog post into Google Translate and publish it in German.

Not only would it be clunky to read, but some of the messaging might also get lost in translation, and in some cases you might end up saying the wrong thing entirely.

You might be able to get away with it when translating a slogan or product name, but marketing material directed towards international markets must be hyper-focused on each market’s needs.

While strides have been made in machine translation for e-commerce, content should appear for all intents and purposes to be generated locally.

If you want to run a multilingual website, be sure that your content management system has multilingual capabilities.

Where we still see RBMT and SMT is in industries that require very precise translation and rely on a well-defined set of words and phrases, like:

- Medicine

- Law

- Agriculture

- Construction

- Automotive

Here, human translators can make use of computer-assisted translation tools. These tools allow for smooth and efficient translation based on previously translated texts and programmable translation glossaries, which are thematic dictionaries assembled through previous translation projects.

Technical translators will consistently use the correct terms prompted automatically by the software.
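As a rough sketch of how such a tool might work, the example below combines a translation memory (previously translated segments) with a glossary check. Everything in it is assumed for illustration: the sample segments, the glossary entries, the function names, and the 0.75 similarity threshold. Fuzzy matching uses `difflib.SequenceMatcher` from the Python standard library, whereas commercial tools use their own matching algorithms.

```python
from difflib import SequenceMatcher

# Hypothetical translation memory: source segment -> approved translation.
TRANSLATION_MEMORY = {
    "Tighten the bolt with a torque wrench.":
        "Ziehen Sie die Schraube mit einem Drehmomentschlüssel an.",
    "Check the oil level before starting the engine.":
        "Prüfen Sie den Ölstand, bevor Sie den Motor starten.",
}

# Hypothetical approved glossary: source term -> required target term.
GLOSSARY = {
    "torque wrench": "Drehmomentschlüssel",
    "oil level": "Ölstand",
}


def best_memory_match(segment, memory, threshold=0.75):
    """Return (source, target, score) for the closest stored segment, or None."""
    best = None
    for source, target in memory.items():
        score = SequenceMatcher(None, segment.lower(), source.lower()).ratio()
        if score >= threshold and (best is None or score > best[2]):
            best = (source, target, score)
    return best


def missing_glossary_terms(source_segment, translation, glossary):
    """List approved target terms that the translation should contain but lacks."""
    source_lower = source_segment.lower()
    translation_lower = translation.lower()
    return [
        target_term
        for source_term, target_term in glossary.items()
        if source_term in source_lower and target_term.lower() not in translation_lower
    ]


# A new segment that differs from a stored one by a single letter.
match = best_memory_match("Tighten the bolts with a torque wrench.", TRANSLATION_MEMORY)
if match is not None:
    source, suggestion, score = match
    print(f"{score:.0%} match, suggested translation: {suggestion}")

# A draft translation that ignores the approved term for "torque wrench".
draft = "Ziehen Sie die Schraube mit einem Schlüssel an."
print(missing_glossary_terms("Tighten the bolt with a torque wrench.", draft, GLOSSARY))
# prints: ['Drehmomentschlüssel']
```

The translator gets a near-match suggestion to post-edit instead of starting from scratch, and the glossary check flags any draft that strays from the approved terminology.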

By avoiding unnecessary changes in the source text and applying approved glossaries and style guides, you gain long-term benefits such as quality assurance and consistency, and save some money at the same time.

Can machine learning, even deep learning, ever fully replace a human being in the translation process? Who knows, but right now it seems all three of these approaches still need to be balanced with the right human intervention to deliver the right results.

In the end, translations based on neural networks take the context of the whole sentence into account. As a result, these translations are often much more natural than those based on rules or probabilities.

We Have the Right Language Solution for You

Rule-based, stat-based, and neural machine translation can transform the way you do business around the world.

The translation and localization experts here at Summa Linguae Technologies help companies like yours with efficient and practical innovations in multilingual communication.

We can tailor machine translation and post-edited machine translation solutions to meet your specific needs and help your business grow in all your target markets.