Wondering which chatbot platform is easiest to build and train? We did some digging for you.

Amazon Lex vs. Google Dialogflow vs. IBM Watson vs. Rasa: Which chatbot platform is easiest to configure?

Depending on your experience with machine learning, programming, and chatbots, the answer will vary.

We’ve experimented with the most popular chatbot platforms on the market.

This article paints a picture of how easy (or difficult) it is to configure a chatbot with almost zero experience. Let’s get into it.

3 Points of Comparison When Choosing a Chatbot Platform

If you’re a novice trying to decide on the simplest chatbot platform to use, read the summaries below or follow along by downloading the full whitepaper.

1. Documentation

The documentation for each platform was simple enough to follow, and each one provided tutorials.

We spent an average of 1 hour reading the documentation and terminologies for each platform.

Rasa had the most interactive documentation of the group. Its Get Started guide followed a story-like framework and let users play with the Python code as they read.

Although Rasa had the most entertaining documentation, IBM Watson and Google Dialogflow seemed to have more thorough resources that were still simple to follow.

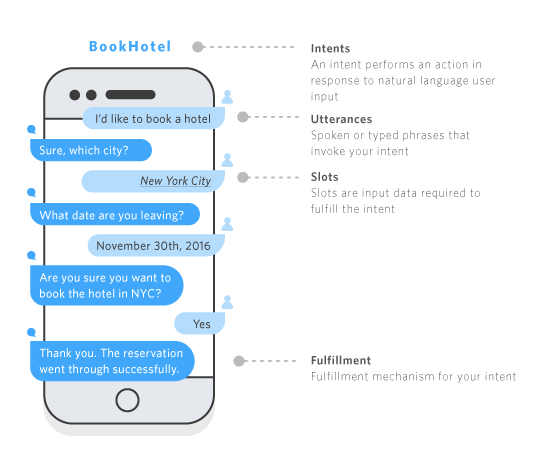

Amazon Lex’s documentation read as if it assumed previous experience with chatbots and machine learning. The image below shows how a chatbot works on Amazon Lex, but the rest of the documentation wasn’t as visual.

IBM Watson’s documentation and Google Dialogflow’s resources were closer to each other in terms of user experience.

Both gave a great explanation of how their platforms worked. Google Dialogflow, however, explained difficult concepts with high-quality visual aids and videos. These videos and the simple wording in the documentation gave us the foundation to understand all the other platforms with ease.

Documentation Winner: For someone who wants to start learning about chatbots, Google Dialogflow’s documentation wins.

2. Configuration

Comparing the configuration of each chatbot platform was more difficult. Each one provided a different experience, and each one had its quirks.

One thing was for sure: the Rasa chatbot was more difficult to configure on the fly. It wasn’t as simple as Dialogflow, Lex, or Watson, but we can appreciate that it still made configuring a chatbot with Python an easy (and fun) experience.

Among Amazon Lex, Google Dialogflow, and IBM Watson platforms, Amazon and IBM did a great job of providing examples that we could take and modify for our use case.

It took 2 hours to configure Amazon Lex, and just over 1.5 hours to fully configure IBM Watson.

Why did IBM Watson take only 1.5 hours?

Watson provided enough examples of user intents, entities, and natural language data for our chatbot to be fully configured to our needs.
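To make these terms concrete, here is a minimal, platform-agnostic sketch of how intents, entities, and example utterances might be organized for a projector rental bot. Every name in it (`RentProjector`, `projector_type`, the sample utterances) is an illustrative assumption. None of it comes from Watson's samples or from any platform's API.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Entity:
    """A piece of information the bot should pull out of a message."""
    name: str
    # Maps each canonical value to the synonyms a user might type.
    values: Dict[str, List[str]] = field(default_factory=dict)


@dataclass
class Intent:
    """Something the user wants to do, with sample phrasings."""
    name: str
    utterances: List[str] = field(default_factory=list)


# Hypothetical training data for a projector rental bot.
projector_type = Entity(
    name="projector_type",
    values={"portable": ["portable", "mini"], "hd": ["hd", "high definition"]},
)

rent_projector = Intent(
    name="RentProjector",
    utterances=[
        "I want to rent a projector",
        "Can I book a portable projector for Friday?",
        "Do you have an HD projector available?",
    ],
)

print(f"{rent_projector.name}: {len(rent_projector.utterances)} example utterances")
print(f"{projector_type.name}: {sorted(projector_type.values)}")
```

Each platform has its own console or file format for entering this kind of data, but the underlying pieces are the same: named intents, the phrases that express them, and the entity values to extract.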

In fact, we dedicated another half an hour to configure our IBM Watson bot a little more.

Configuring IBM Watson felt much more intuitive than configuring Google Dialogflow.

Amazon Lex fell between the two in terms of the configuration experience.

Configuration Winner: The IBM Watson conversational agent takes the cake in our configuration experience.

3. Testing

Testing the chatbots we configured allowed us to detect the elements we needed to work on, as well as any glaring mistakes.

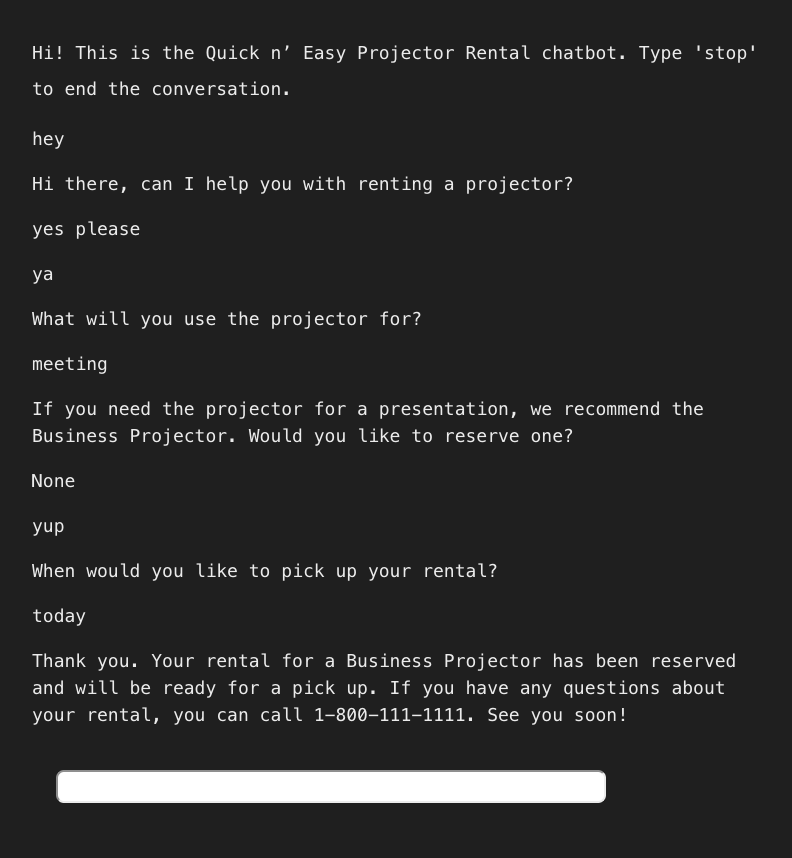

Let’s be honest here – testing with the Rasa chatbot was not as easy as the others. It was so much more satisfying though. There’s something about the more primitive look of the chat dialog that gave us multiple “Eureka!” and “Aha!” moments.

When it came down to testing our work in real-time, Google, IBM, and Amazon’s platforms were a lot more intuitive. The three platforms had conversational agents that could be trained and tested after each change or adjustment.

All of them looked pretty in their own way, and each one would give us relevant error messages that pointed to where we misconfigured the bot.

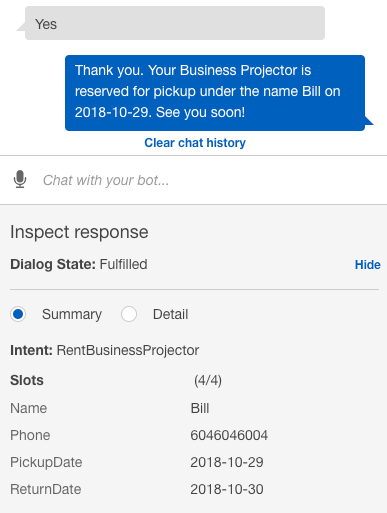

IBM Watson had a tiny edge by labeling each response and command with intents and entities. This gave us the knowledge to understand our dialog and how the bot comprehends our commands.

Google Dialogflow and Amazon Lex had more similar experiences as both platforms used a real-time conversation box to show our bot’s training progress.

The real-time conversation box reflected what the chatbot would look like using their platform, but Amazon Lex did a better job of labeling all variables in the responses.

For example, in the image below, the intent and slots were labeled to show what the chatbot “understood” out of the conversation or command.
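In text form, a labeled result of this kind might look roughly like the output of the sketch below. It is a toy keyword matcher written only to illustrate the idea of an intent plus slots. The intent names, slot names, and the `label_message` helper are all hypothetical, and real platforms rely on trained language-understanding models rather than keyword lookups.

```python
import re

# Hypothetical intent keywords and slot values for a projector rental bot.
INTENT_KEYWORDS = {
    "RentProjector": ["rent", "book", "reserve"],
    "CheckPrice": ["price", "cost", "how much"],
}
SLOT_VALUES = {
    "projector_type": ["portable", "hd"],
    "day": ["monday", "tuesday", "wednesday", "thursday", "friday"],
}


def label_message(text):
    """Return the first matching intent and any slot values found in text."""
    lowered = text.lower()
    words = set(re.findall(r"[a-z]+", lowered))
    # Pick the first intent whose keyword appears anywhere in the message.
    intent = next(
        (name for name, keywords in INTENT_KEYWORDS.items()
         if any(keyword in lowered for keyword in keywords)),
        None,
    )
    # Fill a slot when one of its known values appears as a whole word.
    slots = {
        slot: value
        for slot, values in SLOT_VALUES.items()
        for value in values
        if value in words
    }
    return {"intent": intent, "slots": slots}


result = label_message("Can I rent a portable projector on Friday?")
print(result)
```

Seeing the intent and slots side by side like this is what made it easy to spot where the bot misread a command.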

This was great, but what if we want to share this testing experience with others?

Example: Our Google Dialogflow Chatbot

This is where Google Dialogflow had an edge. If you are a novice chatbot builder, you’re likely to seek feedback from people who understand these chatbot platforms better.

With Google Dialogflow, testing your agent with others is entirely possible. There’s an option to share a link to the chatbot or embed the chatbot so that others can use it or test it immediately.

Each platform had its unique strengths in the testing phase. There was no clear winner here because each platform may have a different appeal to individuals.

For example, the IBM interface provides a ton of information we can work with, but the interface might feel less familiar than Google Dialogflow and Amazon Lex.

The testing of each platform would depend on the user’s skill level as well.

More advanced users would gravitate towards different platforms depending on their particular needs.

For beginners, all that really differed was the look of the testing environment and how the intent or entity information was presented.

Testing Winner: From a testing standpoint, it was a tie between Google Dialogflow, Amazon Lex, and IBM Watson.

Chatbot Platform Comparison: Overall Winner

Which platform made it easiest to train and build a conversational agent?

It was a very close decision. Although it took more time and resources to train Google Dialogflow, we did end up with a great end result. On the other hand, Amazon Lex provided a sample we could configure right away: a chatbot for a flower shop.

This made the configuration process simple and easy to follow. It took only 2 hours to configure the Lex chatbot before it understood a user’s requests and commands related to projector rentals.

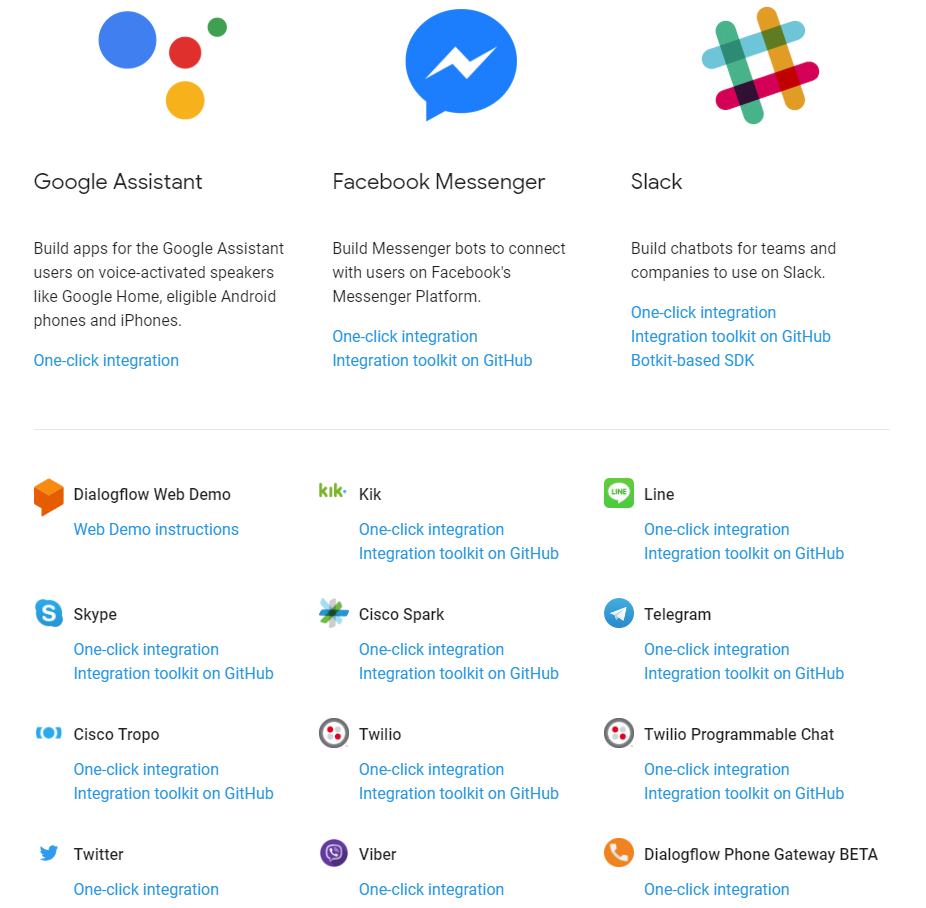

If we were to use the chatbot in the real world, Google Dialogflow looks like it is the easiest to integrate with messaging platforms among the pack because of the number of one-click integrations that are supported.

You can roll out your Dialogflow bot with the click of a button.

Overall Winner: Because of our overall experience with building a complete and fully functional agent, we crowned Amazon Lex as our winner.

Did we factor in user error? If you read the complete version of the experiment, you might notice a few user errors.

For example, intents and entities may have been configured inefficiently.

Example utterances and prompts may have been excessive or not thorough enough.

There are certainly areas where human error could have skewed the results, but this is all a part of the overall experience.

What did we learn about configuring a chatbot?

Truth be told, the big three chatbot platforms (Amazon, Google, and IBM) were all very similar in experience. Rasa was the only platform we tested that required some hands-on Python coding.

Someone without any prior hands-on experience in coding, chatbots, and machine learning can still build conversational agents with a little time investment.

The chatbots in our example may not be ready for real-life scenarios since they only respond to a single case, but they could be built out further to work in real situations and use cases.

For the theoretical business, Quick n’ Easy Projector Rentals, building a chatbot was surprisingly simple.

We picked Amazon Lex as it had the most user-friendly experience. Now that we’ve picked Amazon Lex as a winner, does that mean it is the go-to platform?

This experiment taught us that the most powerful chatbot platform is the one that successfully satisfies your unique use case with as little effort as possible.

It will depend on the user’s technical capabilities, experience with the overall platform (e.g., if you already work on AWS, Lex will probably feel the most natural), and the complexity of the needed agent.

Each chatbot platform had its own advantages and drawbacks.

What was most important to us was how these chatbots handled user intents, entities, and other variables.

What we learned was that it wasn’t the chatbot platform or our coding knowledge that limited our results. It was the lack of user intents, entities, and other variables to feed to the platform’s algorithms so that the bot could learn to communicate better.

If we had spent more time with each chatbot platform, the end result for each would have been a lot more fleshed out.

Download the Chatbot Platform Comparison Whitepaper

Want to see how we configured Amazon Lex, Google Dialogflow, IBM Watson, and Rasa?

Download the full study: You can follow the entire experiment in this white paper that shows the steps we took to map out our intents, entities, and other variables.
