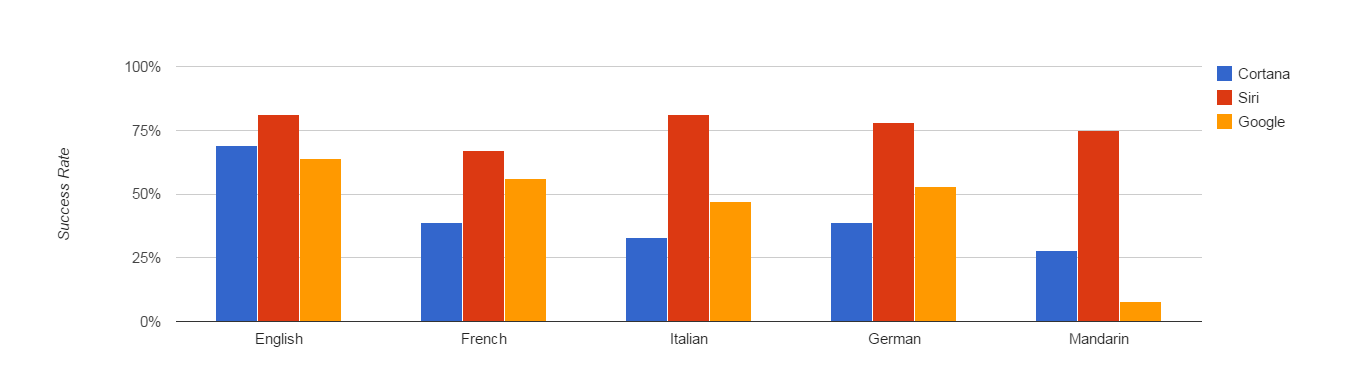

Last weekend we did a guest post for VentureBeat assessing voice assistants in English and comparing Cortana, Google Now, and Siri in foreign languages – French, Italian, German and Mandarin.

We received a number of requests asking us about our methodology. So, we’re sharing our specific test cases and results here.

Our test cases consisted of eighteen questions that users would be likely to ask a personal voice assistant. All requests were issued as voice commands and were designed to test the usefulness of the assistant (rather than accuracy in pure dictation or search).

We penalized assistants that defaulted to a web search for a query that could have produced a direct response (e.g. “call the nearest Chinese restaurant”).

Query Results by Language

Scroll through the slideshow below to see detailed results for each language.

We scored each question as 1.0, 0.5, or 0.0. A full pass (1.0) meant the voice assistant successfully executed the request and returned a direct response.

In contrast, a 0.5 rating was given to questions where the right answer appeared in web search results rather than as a direct response.

0.0 ratings were given to inaccurate answers or to questions which the voice assistant could not successfully execute.
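For readers who want to apply the same rubric to their own tests, here is a minimal Python sketch. The names `Outcome`, `SCORES`, and `accuracy` are hypothetical, the sample outcomes are made up for illustration and are not our results, and averaging the per-question scores is an assumption about how a single accuracy figure could be derived.

```python
from enum import Enum


class Outcome(Enum):
    """Hypothetical labels for the three rubric outcomes."""
    DIRECT = "direct"      # assistant executed the request or answered directly
    WEB_SEARCH = "search"  # right answer appeared in web search results
    FAILED = "failed"      # inaccurate answer, or request could not be executed


# Score assigned to each outcome, as described in the rubric.
SCORES = {
    Outcome.DIRECT: 1.0,
    Outcome.WEB_SEARCH: 0.5,
    Outcome.FAILED: 0.0,
}


def accuracy(outcomes):
    """Return the mean score across questions (assumed aggregation)."""
    if not outcomes:
        raise ValueError("no outcomes to score")
    return sum(SCORES[o] for o in outcomes) / len(outcomes)


# Made-up outcomes for four questions, purely for illustration.
sample = [Outcome.DIRECT, Outcome.WEB_SEARCH, Outcome.FAILED, Outcome.DIRECT]
print(accuracy(sample))  # 0.625
```

Under this assumption, an assistant that answers every question directly would score 1.0, and one that always falls back to a correct web search would score 0.5.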

See below for an accuracy comparison across all five languages.

If you’re interested in learning more about Siri in foreign languages and localizing speech technology, check out how we collect multilingual data and conduct speech recognition testing.